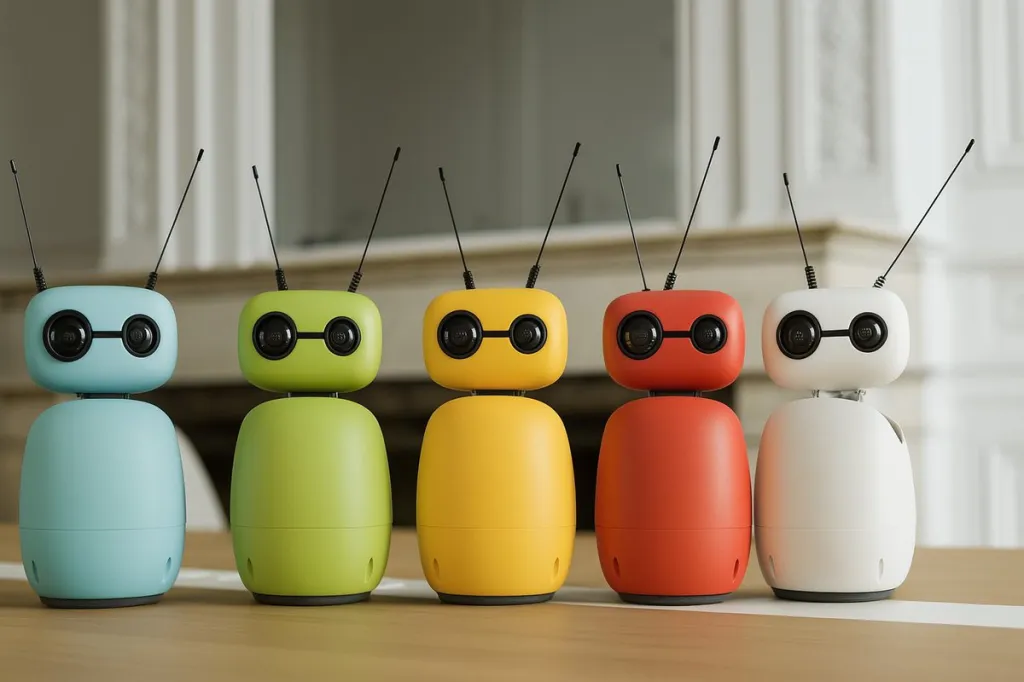

Affordable Programmable Robot

The Reachy Mini, a collaborative project between Hugging Face and Pollen Robotics, has quickly become one of the most significant releases in the “Embodied AI” space in 2025. Often described as the “Raspberry Pi of robotics,” it aims to do for humanoid interaction what the Pi did for computing: make it affordable, hackable, and community-driven.

Overview: What is Reachy Mini?

Unlike its $70,000 predecessor (Reachy 2), the Reachy Mini is a desktop-sized “pseudo-humanoid.” It lacks arms and legs, focusing entirely on expressive head movement, vision, and audio interaction. It is designed as a development platform for AI researchers, educators, and hobbyists to test LLMs (Large Language Models) and computer vision in a physical form.

The Hardware: Two Flavors

The robot is sold as a DIY kit (assembly takes about 2 hours) and comes in two primary versions:

| Feature | Reachy Mini Lite | Reachy Mini (Wireless) |
| --- | --- | --- |
| Price | $299 | $449 |
| Brain | Your PC/Mac (USB connection) | Onboard Raspberry Pi 4 |
| Connectivity | Wired (USB) | Wi-Fi, battery powered |
| Sensors | Camera, 4 mics, speaker | Camera, 4 mics, speaker, IMU |
| Movement | 6-DoF head + torso rotation | 6-DoF head + torso rotation |

Key Strengths

1. Unmatched Expressivity

The standout feature is the Orbita-inspired neck joint. With 6 degrees of freedom (6-DoF), the head can tilt, pan, and roll with fluid, organic motion. When combined with the motorized antennas that act like eyebrows or ears, the robot can convey complex “emotions”—curiosity, sadness, or excitement—that make it feel far more “alive” than a static smart speaker.
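To make "6 degrees of freedom" concrete: a 6-DoF head pose is three translations (x, y, z) plus three rotations (roll, pitch, yaw). The sketch below packs those six numbers into a standard 4x4 homogeneous transform. It is a generic illustration, not the Reachy Mini SDK; the function name `head_pose`, the units, and the yaw-pitch-roll rotation order are all assumptions chosen for the example.

```python
import math


def head_pose(x=0.0, y=0.0, z=0.0, roll=0.0, pitch=0.0, yaw=0.0):
    """Build a 4x4 homogeneous transform from a 6-DoF pose.

    Hypothetical helper, not part of any real SDK. Translations are in
    metres, rotations in radians. The rotation is composed as
    Rz(yaw) * Ry(pitch) * Rx(roll), a common convention assumed here.
    """
    cr, sr = math.cos(roll), math.sin(roll)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cy, sy = math.cos(yaw), math.sin(yaw)
    return [
        [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr, x],
        [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr, y],
        [-sp, cp * sr, cp * cr, z],
        [0.0, 0.0, 0.0, 1.0],
    ]


# A "curious" pose: head raised 1 cm and rolled 15 degrees to one side.
curious = head_pose(z=0.01, roll=math.radians(15))
```

An expressive gesture is then a short sequence of such poses played back over time, with the antennas animated alongside.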

2. The Hugging Face Ecosystem

Because Hugging Face acquired Pollen Robotics in early 2025, the robot is tightly integrated with the Hugging Face Hub and its tooling.

LeRobot Library: You can easily download pre-trained models for face tracking, voice recognition, and “personality” directly from the Hugging Face Hub.

Plug-and-Play Apps: It ships with ~15 ready-to-use behaviors, allowing you to have a conversation with the robot using GPT-4o or Llama 3 almost immediately after assembly.

3. True Open Source

Almost everything—from the Python SDK to the 3D-printed chassis files—is open source. This makes it an incredible tool for schools and developers who want to understand the “guts” of robotics without the “black box” proprietary software common in consumer robots.

The Limitations

No Manipulation: The biggest drawback for many is the lack of arms. Despite the name “Reachy,” this version cannot actually reach for or grab anything. It is a “social” robot, not a “utility” robot.

DIY Complexity: While the kit is marketed as “easy to assemble,” it still requires patience. Dealing with small ribbon cables and 3D-printed tolerances can be frustrating for absolute beginners.

Processing Power (Wireless Version): The Raspberry Pi 4 in the $449 version struggles with heavy local AI inference. For complex vision tasks or local LLMs, you’ll likely still need to offload processing to a more powerful desktop or the cloud.
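A rough back-of-the-envelope check shows why local LLMs are a stretch on a small board. The sketch below estimates the memory needed for model weights alone and compares it with a RAM budget. The 4 GB figure and the 50% headroom are assumptions for illustration (the review does not state how much RAM the onboard Pi has), and the helper names are hypothetical. Memory is also only one constraint: a model that fits may still run too slowly to hold a conversation.

```python
def weights_gb(params_billion, bits_per_weight):
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits_locally(params_billion, bits_per_weight, ram_gb, headroom=0.5):
    """True if the weights fit within `headroom` (a fraction) of total RAM.

    The rest of the RAM is left for the OS, camera pipeline, and audio.
    """
    return weights_gb(params_billion, bits_per_weight) <= ram_gb * headroom


ASSUMED_RAM_GB = 4  # assumption for illustration only

for label, params, bits in [
    ("1B model, 4-bit", 1, 4),
    ("8B model, 4-bit", 8, 4),
    ("8B model, 16-bit", 8, 16),
]:
    verdict = "local" if fits_locally(params, bits, ASSUMED_RAM_GB) else "offload"
    print(f"{label}: ~{weights_gb(params, bits):.1f} GB of weights -> {verdict}")
```

Under these assumptions only the smallest model stays on the robot; an 8B-class model such as Llama 3 8B would be sent to a desktop or the cloud.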

Summary

The Reachy Mini is the best entry point into “Embodied AI” currently on the market. It isn’t a replacement for a household assistant, but it is a masterclass in Human-Robot Interaction (HRI). If you want a desk companion that you can program to “recognize” you, react to your mood, and grow with the community, it is phenomenal value, starting at $299 for the Lite version.